Orchestration

Multi-Agent Orchestration and State Management

Skill 1 of 9 | Pillar I: Autonomous System Architecture

The foundational competency for building robust, scalable agentic AI systems. Orchestration is where everything begins—without mastering how agents maintain state, collaborate, and coordinate across complex workflows, every other skill in the framework becomes impossible to implement effectively.

The Foundation of Agentic AI

When we talk about orchestration in the context of agentic AI, we're addressing the most fundamental question any AI architect must answer: How do you coordinate multiple intelligent agents to accomplish complex tasks reliably?

This isn't a theoretical concern. Every production agentic system—from customer service platforms handling thousands of simultaneous conversations to financial analysis systems processing real-time market data—depends on robust orchestration. The difference between a demo that impresses in a conference room and a system that runs reliably in production often comes down to how well orchestration was designed.

Unlike traditional software, where state transitions are deterministic, agentic systems must manage probabilistic state transitions. The next state depends on LLM outputs that may vary between runs, even with identical inputs. This non-determinism shapes every workflow design decision: model outputs must be validated before they drive a transition, and every step needs a plan for retries and fallbacks.
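One way to design for this variability is sketched below. The model's answer is treated as untrusted input. It is accepted only if it names a valid next state, and otherwise the call is retried, with a safe fallback state if retries run out. `call_llm`, the state names, and the `flaky_llm` stub are hypothetical stand-ins, not part of any framework.

```python
import random
from typing import Callable

# The only states the workflow may move to next (hypothetical labels).
VALID_NEXT_STATES = {"approve", "reject", "escalate"}


def next_state(call_llm: Callable[[str], str], prompt: str,
               max_attempts: int = 3, fallback: str = "escalate") -> str:
    """Ask the model for the next state, accepting only valid answers.

    Output may differ between runs, so each answer is normalized and
    checked against the allowed set; invalid answers trigger a retry,
    and repeated failure falls back to a safe default state.
    """
    for _ in range(max_attempts):
        answer = call_llm(prompt).strip().lower()
        if answer in VALID_NEXT_STATES:
            return answer
    return fallback


def flaky_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model: sometimes returns unusable text.
    return random.choice(["Approve", "I think we should approve", "reject "])


print(next_state(flaky_llm, "Should this refund be approved?"))
```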

The most successful AI architects master these principles—not specific framework APIs—because tools change but fundamentals endure. LangGraph may be popular today, but the concepts of state machines, message passing, and coordination patterns have been refined over decades of distributed systems research.

State Management Architectures

The first core competency within orchestration is understanding how agents maintain and transition between states. There are three primary architectural approaches, each with distinct advantages.

Stateful Graph Architectures

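The core principle here is representing workflows as explicit state machines. Technologies like LangGraph's StateGraph implement this pattern, providing deterministic control flow (the set of possible transitions is fixed, even when the choice among them depends on model output), auditability, and fault tolerance through checkpointing.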

In a stateful graph, each node represents a distinct state, and edges represent valid transitions. The system explicitly tracks the current state, the history of states, and the conditions that trigger transitions. This approach is particularly valuable in regulated industries—financial services, healthcare, legal—where you need complete audit trails showing exactly how the system reached each decision.

The key benefit is predictability. When something goes wrong, you can examine the state graph, identify exactly where the failure occurred, and understand the sequence of events that led to it. Checkpointing allows you to resume from the last known good state rather than starting over.
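To make this concrete, here is a framework-free sketch of an explicit state machine. It deliberately does not use LangGraph's API. A transition table defines the valid edges, every transition is recorded for auditing, and a JSON checkpoint lets a run resume from its last good state. The workflow and state names are hypothetical.

```python
import json
from dataclasses import dataclass, field

# Valid transitions: each state maps to the states it may move to.
TRANSITIONS = {
    "intake": {"analyze"},
    "analyze": {"review", "reject"},
    "review": {"approve", "reject"},
    "approve": set(),
    "reject": set(),
}


@dataclass
class Workflow:
    state: str = "intake"
    history: list[str] = field(default_factory=lambda: ["intake"])

    def transition(self, new_state: str) -> None:
        # Refuse any move the graph does not allow, so failures are explicit.
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"invalid transition {self.state} -> {new_state}")
        self.state = new_state
        self.history.append(new_state)  # audit trail of every state visited

    def checkpoint(self) -> str:
        # Serialize enough to resume later from the last known good state.
        return json.dumps({"state": self.state, "history": self.history})

    @classmethod
    def restore(cls, blob: str) -> "Workflow":
        data = json.loads(blob)
        return cls(state=data["state"], history=data["history"])


wf = Workflow()
wf.transition("analyze")
saved = wf.checkpoint()            # e.g. persisted after each step
resumed = Workflow.restore(saved)  # e.g. after a crash
resumed.transition("review")
print(resumed.history)  # ['intake', 'analyze', 'review']
```

Because the transition table rejects anything it does not list, an invalid move fails loudly at the exact edge where it was attempted.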

Event-Driven State Management

This approach treats state changes as immutable events in a log. Technologies like Kafka and Redis Streams, together with event-sourcing patterns and event-driven agent frameworks such as LlamaIndex AgentWorkflow, implement this paradigm.

Rather than modifying state in place, every change is recorded as a new event. The current state is derived by replaying the event log. This provides temporal replay—you can reconstruct the system's state at any point in history. It also enables distributed processing, as events can be consumed by multiple systems independently.

Event-driven architectures excel at enterprise integration scenarios where agents must interact with existing message-based infrastructure. They're also invaluable for debugging, as you can replay the exact sequence of events that led to any outcome.
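The replay idea can be sketched in a few lines of plain Python. This is an in-memory illustration under simplifying assumptions: a Python list stands in for a durable log such as a Kafka topic, and the event kinds are hypothetical. State is never modified in place, and any past state can be rebuilt by replaying a prefix of the log.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Event:
    # Immutable record of one state change (hypothetical event shape).
    kind: str
    data: dict[str, Any]


def apply(state: dict[str, Any], event: Event) -> dict[str, Any]:
    """Return a new state with one event applied; never mutates the input."""
    new_state = dict(state)
    if event.kind == "task_added":
        new_state["tasks"] = state.get("tasks", []) + [event.data["task"]]
    elif event.kind == "task_completed":
        new_state["done"] = state.get("done", []) + [event.data["task"]]
    return new_state


def replay(log: list[Event], upto: Optional[int] = None) -> dict[str, Any]:
    """Rebuild state by folding events; `upto` reconstructs a past point."""
    state: dict[str, Any] = {}
    for event in log[:upto]:
        state = apply(state, event)
    return state


log = [
    Event("task_added", {"task": "fetch prices"}),
    Event("task_added", {"task": "summarize"}),
    Event("task_completed", {"task": "fetch prices"}),
]
print(replay(log))          # {'tasks': ['fetch prices', 'summarize'], 'done': ['fetch prices']}
print(replay(log, upto=1))  # {'tasks': ['fetch prices']}
```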

Context-Based State Management

The third approach treats state as a first-class object supplied through dependency injection. Pydantic AI's context objects exemplify this pattern, providing excellent testability and modularity.

In this model, state is passed explicitly through the system rather than maintained in a central location. Each agent or function receives the context it needs and produces updated context as output. This makes testing straightforward—you can inject mock contexts to verify behavior under specific conditions.

Context-based approaches work particularly well in microservices architectures where explicit state management is already the norm, and in testing-heavy environments where the ability to isolate and test individual components is paramount.
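A minimal sketch of the context-passing style follows, written in plain Python rather than with Pydantic AI's own context API. The step receives an immutable context holding both data and an injected dependency, and it returns a new context instead of mutating shared state. `AgentContext`, `lookup_balance`, and the threshold of 100 are hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class AgentContext:
    # Everything the step needs is passed in explicitly (hypothetical fields).
    customer_id: str
    lookup_balance: Callable[[str], float]  # injected dependency
    notes: tuple[str, ...] = ()


def check_balance(ctx: AgentContext) -> AgentContext:
    """Read via the injected dependency and return an updated context."""
    balance = ctx.lookup_balance(ctx.customer_id)
    note = "low balance" if balance < 100 else "balance ok"
    return replace(ctx, notes=ctx.notes + (note,))


# In a test, inject a mock instead of a real database or API client.
ctx = AgentContext(customer_id="c-42", lookup_balance=lambda _id: 50.0)
print(check_balance(ctx).notes)  # ('low balance',)
```

Swapping the lambda for a real client changes nothing in `check_balance`, which is what makes the step easy to test in isolation.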

Control Flow Patterns

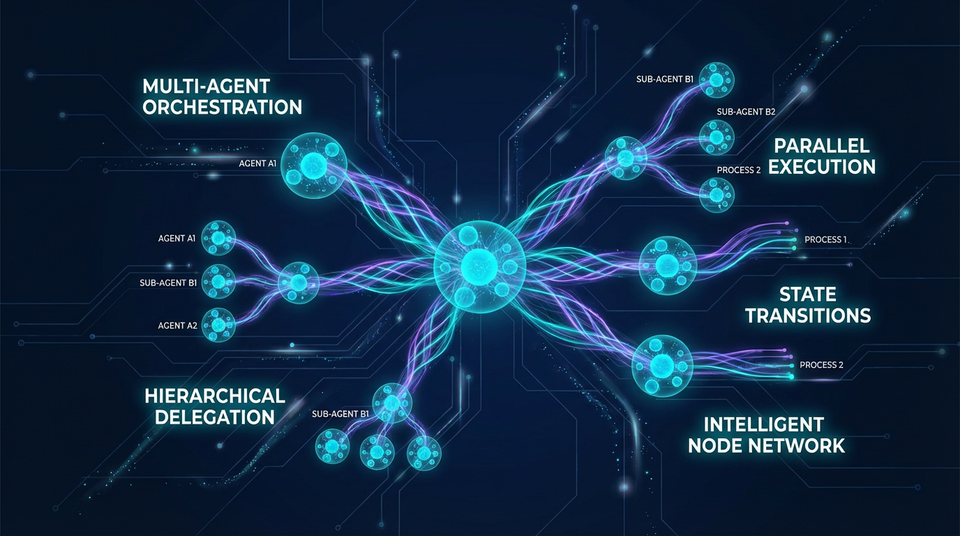

The second core competency is understanding how agents collaborate and coordinate. Different tasks require different coordination patterns, and choosing the wrong one creates bottlenecks, failures, and scaling problems.

Sequential Pipelines

The simplest pattern is linear execution: Agent A completes its work, passes results to Agent B, which passes to Agent C, and so on. This pattern is appropriate when each step genuinely depends on the previous step's output.

Sequential pipelines work well for document processing workflows—extract text, analyze content, generate summary, format output. They're predictable and easy to debug. The tradeoff is that they don't parallelize, so total execution time is the sum of all steps.
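A sequential pipeline reduces to function composition. In this sketch the three functions are hypothetical stand-ins for agents; a real step would call a model. Each one consumes the previous step's output, so the steps cannot overlap.

```python
from functools import reduce
from typing import Callable

Step = Callable[[str], str]


# Hypothetical stand-ins for agents; each consumes the previous output.
def extract(doc: str) -> str:
    return doc.strip()


def analyze(text: str) -> str:
    return f"{len(text.split())} words"


def summarize(analysis: str) -> str:
    return f"Summary: document has {analysis}"


def run_pipeline(steps: list[Step], payload: str) -> str:
    # Strictly linear: step N+1 starts only after step N returns.
    return reduce(lambda acc, step: step(acc), steps, payload)


print(run_pipeline([extract, analyze, summarize], "  quarterly revenue rose sharply  "))
# Summary: document has 4 words
```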

Parallel Execution and Fan-Out/Fan-In

When you need to process multiple independent tasks, fan-out/fan-in patterns decompose work across parallel workers. A manager agent divides the task, multiple workers execute simultaneously, and an aggregator combines results.

This pattern dramatically improves throughput for batch operations. If you need to analyze 100 documents, running them in parallel through 10 workers can approach a tenfold speedup over sequential processing, provided the documents take similar time and the workers are not throttled by a shared bottleneck such as an API rate limit. The complexity comes in the aggregation step: combining partial results into coherent output requires careful design.
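A sketch of fan-out/fan-in with `asyncio` follows. It assumes the per-document work is I/O-bound, for example waiting on a model API. `analyze` is a hypothetical placeholder that sleeps and returns a length-based score. A semaphore caps concurrency at 10 workers, and a simple sum stands in for the aggregation step, which in practice is usually the harder part.

```python
import asyncio


async def analyze(doc: str) -> int:
    # Hypothetical worker: simulates an I/O-bound model call, returns a score.
    await asyncio.sleep(0.01)
    return len(doc)


async def fan_out_fan_in(docs: list[str], max_workers: int = 10) -> dict[str, int]:
    sem = asyncio.Semaphore(max_workers)  # at most max_workers run at once

    async def worker(doc: str) -> int:
        async with sem:
            return await analyze(doc)

    # Fan out: schedule every document; gather preserves input order.
    results = await asyncio.gather(*(worker(d) for d in docs))
    # Fan in: the aggregator combines partial results into one output.
    return {"documents": len(results), "total_score": sum(results)}


docs = [f"document {i}" for i in range(100)]
print(asyncio.run(fan_out_fan_in(docs)))  # {'documents': 100, 'total_score': 1090}
```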

Hierarchical Delegation

Complex enterprise workflows often require multiple levels of coordination. Executive agents make high-level decisions and delegate to manager agents, which coordinate worker agents handling specific tasks.

This mirrors organizational structures for good reason—it provides clear responsibility boundaries and enables specialists to focus on their domains. The risk is over-engineering simple tasks with unnecessary hierarchy. Use hierarchical patterns when genuine specialization and coordination complexity justify the overhead.
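Hierarchical delegation can be sketched as nested routing. Here the executive's plan is hard-coded, whereas in a real system it would typically come from a model. The class names, domains, and lambda workers are hypothetical.

```python
from typing import Callable

Worker = Callable[[str], str]


class Manager:
    """Mid-level agent: routes each subtask to the specialist for its domain."""

    def __init__(self, workers: dict[str, Worker]):
        self.workers = workers

    def handle(self, domain: str, subtask: str) -> str:
        if domain not in self.workers:
            raise KeyError(f"no specialist for {domain!r}")
        return self.workers[domain](subtask)


class Executive:
    """Top-level agent: turns a plan of (domain, subtask) pairs into delegations."""

    def __init__(self, manager: Manager):
        self.manager = manager

    def run(self, plan: list[tuple[str, str]]) -> list[str]:
        return [self.manager.handle(domain, task) for domain, task in plan]


manager = Manager({
    "legal": lambda t: f"legal review of {t}",
    "finance": lambda t: f"cost model for {t}",
})
exec_agent = Executive(manager)
print(exec_agent.run([("finance", "vendor contract"), ("legal", "vendor contract")]))
# ['cost model for vendor contract', 'legal review of vendor contract']
```

Each layer only knows the layer directly below it, which is where the clear responsibility boundaries come from; for a single-domain task, the extra layer would be pure overhead.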

Dynamic and Adaptive Topologies

The most sophisticated approach uses meta-orchestrators that select coordination patterns at runtime based on task characteristics. A system might use sequential processing for simple requests, parallel execution for batch operations, and hierarchical delegation for complex multi-stage workflows.

This adaptability comes with complexity—the meta-orchestrator itself becomes a critical component that must correctly assess tasks and select appropriate patterns. But for systems handling diverse workloads, dynamic topologies provide flexibility that fixed patterns cannot match.
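A meta-orchestrator's selection step might look like the rule-based sketch below. The task features and thresholds are assumptions chosen for illustration. A production system might instead use a model or a learned classifier for this assessment, which is exactly why the selector becomes a critical component.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # Hypothetical task features a meta-orchestrator might inspect.
    items: int               # number of independent units of work
    stages: int              # number of dependent stages
    needs_specialists: bool  # whether distinct domains are involved


def select_topology(task: Task) -> str:
    """Rule-based pattern selection; the rules are illustrative only."""
    if task.needs_specialists and task.stages > 1:
        return "hierarchical"
    if task.items > 1:
        return "parallel"
    return "sequential"


print(select_topology(Task(items=1, stages=3, needs_specialists=False)))    # sequential
print(select_topology(Task(items=100, stages=1, needs_specialists=False)))  # parallel
print(select_topology(Task(items=1, stages=4, needs_specialists=True)))     # hierarchical
```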

Inter-Agent Communication Protocols

The third core competency addresses how agents exchange information and control. The choice of communication pattern has profound implications for latency, scalability, and fault tolerance.

Synchronous Request-Response

When Agent A calls Agent B and waits for a response, you have synchronous communication. This is simple to reason about and appropriate for real-time interactions where latency is critical. Chat applications, interactive assistants, and user-facing systems often use synchronous patterns.

The limitation is that the caller is blocked while waiting. If Agent B is slow or fails, Agent A cannot make progress. This creates coupling that can cascade through the system.
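The blocking risk can be bounded with a timeout. In this sketch, Agent A waits synchronously for Agent B but gives up after a deadline and degrades to a fallback. `agent_b` and the timings are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError


def agent_b(query: str) -> str:
    # Hypothetical downstream agent that is sometimes slow.
    time.sleep(0.5 if "slow" in query else 0.01)
    return f"answer to {query}"


def call_agent_b(pool: ThreadPoolExecutor, query: str, timeout: float = 0.1) -> str:
    """Agent A blocks until Agent B replies, but only up to `timeout` seconds."""
    future = pool.submit(agent_b, query)
    try:
        return future.result(timeout=timeout)
    except TimeoutError:
        # Bound the wait: degrade instead of blocking indefinitely.
        return "fallback: agent B unavailable"


with ThreadPoolExecutor(max_workers=2) as pool:
    print(call_agent_b(pool, "balance"))      # answer to balance
    print(call_agent_b(pool, "slow report"))  # fallback: agent B unavailable
```

The timeout frees the caller but not the worker thread: the slow call keeps running until it finishes, so timeouts limit the cascade rather than remove the coupling.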

Asynchronous Message Passing

Non-blocking communication through message queues decouples agents entirely. Agent A sends a message and continues working. Agent B processes the message when ready. This pattern scales well and handles failures gracefully—messages can be retried, queued, or rerouted.

Long-running tasks, background processing, and systems requiring high throughput typically use asynchronous patterns. The tradeoff is complexity in tracking task completion and handling eventual consistency.
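The decoupling can be illustrated with an in-process `asyncio.Queue` standing in for a broker such as Kafka, RabbitMQ, or Redis Streams. This sketch omits what a real broker provides (persistence, acknowledgements, retries). The agent functions and job names are hypothetical.

```python
import asyncio


async def agent_a(queue: asyncio.Queue) -> None:
    """Producer: enqueue work without waiting for any reply."""
    for i in range(3):
        await queue.put(f"job-{i}")  # returns immediately on an unbounded queue
    await queue.put(None)  # sentinel: no more work


async def agent_b(queue: asyncio.Queue, results: list[str]) -> None:
    """Consumer: process messages whenever it is ready."""
    while (msg := await queue.get()) is not None:
        await asyncio.sleep(0.01)  # simulated slow processing
        results.append(f"done {msg}")


async def main() -> list[str]:
    queue: asyncio.Queue = asyncio.Queue()
    results: list[str] = []
    await asyncio.gather(agent_a(queue), agent_b(queue, results))
    return results


print(asyncio.run(main()))  # ['done job-0', 'done job-1', 'done job-2']
```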

Shared Memory and Blackboard Architectures

Some problems require multiple agents to collaborate on shared state. Blackboard architectures provide a common space where agents read current state, contribute their analysis, and build toward collective solutions.

This pattern works well for complex problem-solving where different specialists contribute partial solutions. However, it requires careful coordination to prevent conflicts and ensure consistency.
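Below is a minimal in-process blackboard, assuming threads as the agents. A lock serializes each read and write, and each hypothetical specialist reads the board before adding its own finding. The sketch also shows why coordination is hard: the read and the write are separate operations, so a specialist may act on a view that is already out of date.

```python
import threading


class Blackboard:
    """Shared workspace; a lock serializes access so writes don't collide."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._entries: dict[str, list[str]] = {}

    def contribute(self, topic: str, finding: str) -> None:
        with self._lock:
            self._entries.setdefault(topic, []).append(finding)

    def read(self, topic: str) -> list[str]:
        with self._lock:
            return list(self._entries.get(topic, []))  # copy, not a live view


board = Blackboard()


def specialist(name: str) -> None:
    # Hypothetical specialist. The read and the write take the lock
    # separately, so `seen` may be stale by the time the write happens.
    seen = len(board.read("diagnosis"))
    board.contribute("diagnosis", f"{name} (after {seen} prior findings)")


threads = [threading.Thread(target=specialist, args=(n,))
           for n in ("radiology", "labs", "history")]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(board.read("diagnosis")))  # 3
```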

Handoff Mechanisms

When agents transfer control and context to other agents, explicit handoff mechanisms ensure nothing is lost in transition. This is essential for multi-stage workflows where each agent builds on previous work.

Well-designed handoffs include not just results but context about how those results were obtained, confidence levels, and any caveats the receiving agent should consider.
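A handoff can be modeled as an explicit, validated message. The field names below are hypothetical. They carry the result together with how it was obtained, a confidence estimate, and caveats, so the receiving agent can decide whether to trust the result or re-check it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handoff:
    """What one agent passes to the next (field names are hypothetical)."""
    from_agent: str
    to_agent: str
    result: str
    method: str        # how the result was obtained
    confidence: float  # 0.0 to 1.0, the sender's own estimate
    caveats: tuple[str, ...] = ()

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


h = Handoff(
    from_agent="researcher",
    to_agent="writer",
    result="draft market summary",
    method="searched three internal reports",
    confidence=0.8,
    caveats=("sources older than 6 months",),
)
# The receiving agent uses confidence and caveats to decide what to re-check.
if h.confidence < 0.9 or h.caveats:
    print(f"{h.to_agent}: verify before use -> {h.caveats}")
```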

The Principle-Based Transformation

The agentic AI landscape is evolving rapidly. New frameworks appear regularly, each with its own APIs and conventions. If your expertise is tied to specific frameworks, you'll constantly be relearning.

The alternative is principle-based expertise. Instead of mastering LangGraph's StateGraph API, master finite state machines. Instead of learning AutoGen's GroupChatManager syntax, understand distributed coordination patterns. Instead of memorizing Semantic Kernel's plugin system, learn universal design patterns.

This transformation provides framework portability—design once, implement in any framework. It reduces vendor lock-in—switch frameworks without redesigning your architecture. It ensures transferable knowledge—skills apply across tools and languages. And it enables future-proofing—principles endure while tools evolve.

Transferable Competencies

Mastering orchestration requires proficiency in several foundational areas that extend far beyond agentic AI.

Distributed Systems knowledge is essential—concurrency, eventual consistency, fault tolerance, and the CAP theorem all apply directly to multi-agent systems.

Finite State Machines provide the formal foundation for state management—state modeling, transition logic, and determinism are core concepts.

Design Patterns from software engineering apply directly—Observer, Mediator, Blackboard, and Chain of Responsibility patterns appear throughout orchestration architectures.

Data Serialization skills ensure agents can exchange information reliably—Pydantic, JSON Schema, and type safety prevent data corruption and enable validation.

Message Queue expertise enables asynchronous communication—Kafka, RabbitMQ, and Redis Streams are common infrastructure components.

Event-Driven Architecture principles enable sophisticated state management—event sourcing, CQRS, and saga patterns solve complex coordination problems.

Common Pitfalls

Across many orchestration implementations that failed, the same patterns emerge consistently.

Over-coupling to frameworks mixes business logic with framework APIs, making systems impossible to migrate or test independently. Keep business logic separate from orchestration infrastructure.

Ignoring non-determinism assumes LLM outputs will be consistent. They won't. Design for variability with validation, retries, and fallbacks.

Poor state schema design creates unclear or inconsistent state representations that confuse both agents and developers.

Missing validation allows invalid state transitions that should be impossible according to business rules.

Synchronous-only communication creates bottlenecks and scaling issues when asynchronous patterns would provide better throughput.

No observability leaves you unable to debug complex agent interactions. Instrument everything from day one.

Premature optimization chooses complex patterns for simple tasks. Start simple and add complexity only when needed.

Implementation Guidance

For architects, the priority is designing state schemas before choosing frameworks. Model workflows as finite state machines or event flows. Define clear agent roles and responsibilities. Specify communication patterns explicitly. Plan for failure modes and recovery. Document state transitions and decision logic.

For developers, abstract framework-specific code behind interfaces. Implement comprehensive state validation. Use type-safe data structures throughout. Add observability at every state transition. Test with non-deterministic LLM outputs—your tests should verify behavior under variability, not assume determinism.
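Keeping framework code behind an interface might look like this sketch using `typing.Protocol`. `Orchestrator`, `approve_refund`, and the workflow name are hypothetical. A real adapter would wrap LangGraph, AutoGen, or another framework behind the same `run` method, and the fake shown here is what tests would use.

```python
from typing import Protocol


class Orchestrator(Protocol):
    """The interface business logic depends on; frameworks sit behind it."""

    def run(self, workflow: str, payload: dict) -> dict: ...


def approve_refund(orchestrator: Orchestrator, order_id: str) -> bool:
    # Business logic knows nothing about any specific framework.
    outcome = orchestrator.run("refund_review", {"order_id": order_id})
    return outcome.get("decision") == "approve"


class FakeOrchestrator:
    """Test double; a framework-backed adapter would expose the same `run`."""

    def __init__(self, decision: str):
        self.decision = decision
        self.calls: list[tuple[str, dict]] = []

    def run(self, workflow: str, payload: dict) -> dict:
        self.calls.append((workflow, payload))
        return {"decision": self.decision}


fake = FakeOrchestrator("approve")
print(approve_refund(fake, "A-100"))  # True
print(fake.calls)                     # [('refund_review', {'order_id': 'A-100'})]
```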

For organizations, establish principle-based design standards. Create internal pattern libraries. Invest in distributed systems training. Build abstraction layers for framework flexibility. Conduct architecture reviews focused on principles rather than implementation details.

Looking Forward

The field is moving toward standardized protocols, with A2A and MCP gaining traction as candidates for universal communication standards. We're seeing AI-native state management, where LLMs reason about state transitions directly. Self-healing systems that autonomously recover from failures are emerging. Formal verification, which provides mathematical proofs of agent system correctness, is becoming practical. Hybrid architectures that combine multiple state management paradigms offer flexibility.

The bottom line: Orchestration is the foundation upon which all other agentic AI competencies are built. Mastering these principles is not optional—it is the defining characteristic of a proficient agentic AI architect.

Next Skill: Interoperability — Building bridges between systems and protocols
