Memory Architecture

Hybrid Memory Architectures and Knowledge Engineering

Skill 4 of 9 | Pillar II: Knowledge & Context

The cognitive foundation that transforms agents from simple question-answering systems into sophisticated knowledge workers capable of complex reasoning over vast information landscapes.

Beyond Simple RAG

Here's what separates truly intelligent agents from glorified search engines: memory that thinks like a brain, not a filing cabinet. Most AI implementations today use basic Retrieval-Augmented Generation (RAG)—stuff documents into a vector database, retrieve the top-k similar chunks, and hope for the best. It works for demos. It falls apart in production.

Skill 4 represents the discipline of designing sophisticated memory and knowledge systems that empower intelligent agents to reason, remember, and learn. This isn't about choosing between vector databases and knowledge graphs. It's about understanding when each approach shines, how to combine them, and how to architect memory systems that mirror the elegance of human cognition.

The stakes are significant. Without proper memory architecture, your agent forgets crucial context from earlier in a conversation. It can't connect dots across different documents. It retrieves technically similar but semantically wrong information. It fails on any question requiring multi-hop reasoning. Memory architecture is the difference between an agent that truly understands and one that merely pattern-matches.

The Three Sub-Skills of Memory Architecture

| Sub-Skill | Focus Area | Key Concepts |
|---|---|---|
| 4.1 Three-Tier Memory | Cognitive model for agent memory | Episodic, semantic, and procedural memory layers |
| 4.2 Hybrid Retrieval | Combining semantic search and structured traversal | Vector embeddings, knowledge graphs, GraphRAG |
| 4.3 Retrieval Optimization | Advanced retrieval quality and efficiency | Contextual embeddings, hierarchical retrieval, hybrid fusion |

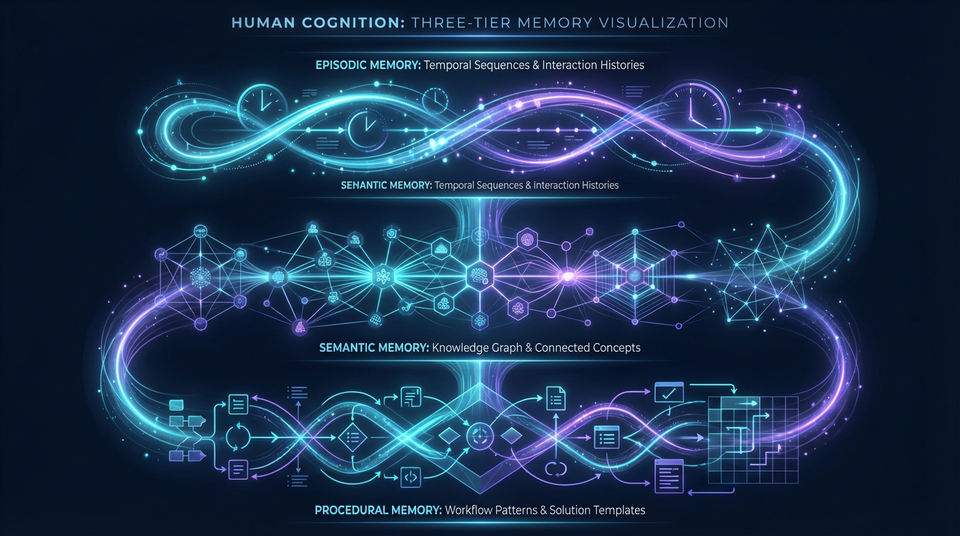

4.1 The Three-Tier Memory Architecture

Cognitive science teaches us that human memory isn't a single system—it's multiple specialized systems working in concert. The most effective agent memory architectures mirror this structure with three distinct layers, each serving a specific purpose.

Episodic Memory: What Happened When

Episodic memory captures the specific history of agent-user interactions with temporal and contextual indexing. Think of it as the agent's autobiographical memory—not just what was discussed, but when, with whom, and in what context.

Many modern implementations use temporal knowledge graphs that can answer queries like "What did the user ask about last Tuesday?" or "What was the outcome of the project review meeting?" Systems like Zep and Graphiti specialize in this layer, providing time-aware indexing by user, entity, and session.
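To make the indexing idea concrete, here is a minimal sketch of an episodic layer. It assumes a plain in-memory list rather than a temporal knowledge graph, and `Episode`, `EpisodicStore`, and `last_weekday` are hypothetical names for illustration, not the Zep or Graphiti APIs. Each record carries user, session, timestamp, and entity keys, so "last Tuesday" or "the project review" becomes an exact filter instead of a similarity guess.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, datetime, timedelta


@dataclass(frozen=True)
class Episode:
    user_id: str
    session_id: str
    timestamp: datetime
    role: str                       # "user" or "agent"
    text: str
    entities: tuple[str, ...] = ()  # entity keys extracted at write time


class EpisodicStore:
    """Hypothetical in-memory episodic log indexed by user, time, and entity."""

    def __init__(self) -> None:
        self._episodes: list[Episode] = []

    def record(self, episode: Episode) -> None:
        self._episodes.append(episode)

    def on_day(self, user_id: str, day: date, role: str | None = None) -> list[Episode]:
        """Everything recorded for a user on one calendar day, oldest first."""
        hits = [
            e for e in self._episodes
            if e.user_id == user_id
            and e.timestamp.date() == day
            and (role is None or e.role == role)
        ]
        return sorted(hits, key=lambda e: e.timestamp)

    def mentioning(self, user_id: str, entity: str) -> list[Episode]:
        """Episodes for a user that mention an entity, most recent first."""
        hits = [e for e in self._episodes if e.user_id == user_id and entity in e.entities]
        return sorted(hits, key=lambda e: e.timestamp, reverse=True)


def last_weekday(today: date, weekday: int) -> date:
    """Most recent date strictly before `today` that falls on `weekday` (Mon=0 ... Sun=6)."""
    delta = (today.weekday() - weekday) % 7 or 7
    return today - timedelta(days=delta)


store = EpisodicStore()
store.record(Episode("u1", "s1", datetime(2024, 6, 4, 10, 0), "user",
                     "How do I rotate our API keys?", ("API keys",)))
store.record(Episode("u1", "s2", datetime(2024, 6, 6, 9, 30), "user",
                     "What was the outcome of the project review?", ("project review",)))

tuesday = last_weekday(date(2024, 6, 6), weekday=1)  # 2024-06-06 is a Thursday
print([e.text for e in store.on_day("u1", tuesday, role="user")])
# -> ['How do I rotate our API keys?']
```

A temporal knowledge graph adds relationships and fact validity intervals on top of this, but the core contract is the same: time and identity are first-class keys, not something the embedding model has to infer.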

Why it matters: Without episodic memory, every conversation starts from scratch. The agent can't build on previous interactions, can't remember user preferences, and can't maintain continuity across sessions. For enterprise applications where relationships span months or years, this is fatal.

Use cases include:

- Multi-session conversations that build on previous context

- Personalized experiences that remember user preferences

- Debugging and auditing interaction histories

- Continuity across support tickets and customer interactions

Semantic Memory: What the Agent Knows

Semantic memory represents the agent's general knowledge base—facts, policies, procedures, and domain expertise. Unlike episodic memory (which remembers specific events), semantic memory stores generalized knowledge that applies across contexts.

This layer requires hybrid approaches supporting both semantic similarity search (using vector embeddings) and structured queries (using knowledge graphs). Microsoft Research's GraphRAG methodology exemplifies this hybrid approach, enabling both "vibes-based" questions ("Find documents similar to this") and precise multi-hop reasoning ("How does the delay in Project Apollo impact the Q3 budget that Sarah approved?").

The power of semantic memory: It's the difference between an agent that can only answer questions about information it was directly given and one that can reason across its knowledge base, connecting dots that weren't explicitly connected.

Use cases include:

- Enterprise knowledge bases spanning thousands of documents

- Policy retrieval and compliance verification

- Domain expertise and specialized knowledge

- Complex reasoning requiring information synthesis

Procedural Memory: How to Do Things

Procedural memory captures the agent's learned skills—successful problem-solving patterns, proven workflows, and effective solutions. When an agent encounters a familiar problem type, it can retrieve and adapt a proven approach rather than reasoning from scratch.

Implementation patterns include prompt templates for common tasks, few-shot examples that demonstrate effective approaches, and cached execution plans that can be adapted to new contexts. This connects directly to Skill 5's concept of agentic plan caching.
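A minimal sketch of procedural memory as a plan cache, under loud assumptions: word-set (Jaccard) overlap stands in for embedding similarity over task descriptions, success counts stand in for real outcome tracking, and `ProceduralMemory`, `Procedure`, and `recall` are hypothetical names. Adapting the retrieved plan to the new context would still be the model's job.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


def tokens(text: str) -> frozenset[str]:
    """Lowercase word set; a crude stand-in for an embedding of the task description."""
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class Procedure:
    task_type: str
    keywords: frozenset[str]
    steps: list[str]
    successes: int = 0
    failures: int = 0

    @property
    def success_rate(self) -> float:
        total = self.successes + self.failures
        return self.successes / total if total else 0.0


class ProceduralMemory:
    """Hypothetical store of plans that worked, retrieved by task similarity."""

    def __init__(self, min_similarity: float = 0.3) -> None:
        self.procedures: list[Procedure] = []
        self.min_similarity = min_similarity

    def store(self, task_type: str, description: str, steps: list[str]) -> Procedure:
        proc = Procedure(task_type, tokens(description), steps)
        self.procedures.append(proc)
        return proc

    def recall(self, description: str) -> Procedure | None:
        """Best proven plan for a task, or None to fall back to reasoning from scratch."""
        query = tokens(description)

        def similarity(p: Procedure) -> float:  # Jaccard overlap of word sets
            union = query | p.keywords
            return len(query & p.keywords) / len(union) if union else 0.0

        candidates = [p for p in self.procedures if similarity(p) >= self.min_similarity]
        # Prefer close matches first, then plans with a better track record.
        return max(candidates, key=lambda p: (similarity(p), p.success_rate), default=None)


memory = ProceduralMemory()
reset = memory.store(
    "password_reset",
    "reset a user password in Active Directory",
    ["verify identity", "reset password in AD", "force change at next login"],
)
reset.successes += 3  # outcome feedback recorded after successful runs

plan = memory.recall("user needs a password reset in Active Directory")
print(plan.steps if plan else "no proven plan; reason from scratch")
```

The `None` branch matters as much as the hit: a similarity threshold keeps the agent from forcing a cached plan onto a problem it does not fit.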

Why it matters: Without procedural memory, agents reinvent the wheel constantly. They solve the same types of problems differently each time, leading to inconsistency, inefficiency, and quality variance. Procedural memory enables agents to build on what works.

Use cases include:

- Workflow automation with proven patterns

- Best practice retrieval for common scenarios

- Solution reuse across similar problems

- Consistent quality through standardized approaches

4.2 Hybrid Retrieval: Vector + Graph

The most powerful knowledge systems combine two complementary retrieval paradigms: vector search for breadth and graph traversal for depth. Understanding when to use each—and how to combine them—is core to Skill 4 mastery.

Vector Search: Semantic Similarity at Scale

Vector embeddings capture semantic meaning in high-dimensional space, enabling agents to find relevant information even when exact keywords don't match. A query about "employee compensation" can match documents discussing "salary structure" or "pay bands" without those exact terms appearing.

The technical foundation involves dense embeddings from models such as OpenAI's text-embedding-3 family or Cohere's embedding models, stored in vector databases like Weaviate, Pinecone, or Qdrant. Approximate nearest neighbor (ANN) algorithms such as HNSW and IVF enable sub-second search across millions of vectors by trading a small amount of recall for speed.
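The similarity search itself is simple enough to write out. This minimal sketch does exact, brute-force cosine search in pure Python; the three-dimensional vectors are hand-written placeholders for real model output, and the document IDs are hypothetical.

```python
import heapq
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: compares vector direction, ignoring length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], index: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Exact nearest neighbours by brute force: O(n) comparisons per query.

    ANN indexes such as HNSW or IVF avoid scanning every vector, at the cost
    of occasionally missing a true neighbour.
    """
    scored = ((doc_id, cosine(query, vec)) for doc_id, vec in index.items())
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Toy 3-d "embeddings", hand-written for illustration; real models emit hundreds of dimensions.
index = {
    "salary-structure": [0.9, 0.1, 0.0],
    "pay-bands": [0.8, 0.2, 0.1],
    "office-parking": [0.0, 0.1, 0.9],
}
query = [0.85, 0.15, 0.05]  # pretend embedding of "employee compensation"
for doc_id, score in top_k(query, index, k=2):
    print(f"{doc_id}: {score:.3f}")
```

Neither matching document shares a keyword with "employee compensation"; they win purely on vector direction, which is the property the paragraph above describes.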

Vector search excels at:

- Finding semantically similar content regardless of terminology

- Exploratory queries where the user doesn't know exact keywords

- Fuzzy matching across unstructured documents

- Breadth-first exploration of knowledge bases

But vector search struggles with:

- Multi-hop reasoning ("Find projects affected by suppliers in Region X")

- Precise relationship queries ("Who reports to whom?")

- Temporal queries ("What changed since last quarter?")

- Questions requiring structured traversal

Graph Traversal: Following Relationships

Knowledge graphs capture explicit relationships between entities, enabling structured traversal and multi-hop reasoning that vector search simply cannot support. When a query requires following relationships—dependencies, hierarchies, causal chains—graphs are essential.

Graph databases like Neo4j and Memgraph use the Cypher query language for relationship-based retrieval, while RDF triple stores use SPARQL. Path-finding algorithms, centrality measures, and subgraph matching enable sophisticated analysis.
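Here is a pure-Python sketch of the multi-hop query "Find projects affected by suppliers in Region X". The entity names, the relation labels (LOCATED_IN, SUPPLIES, USED_IN), and the "~" prefix for walking an edge backwards are hypothetical conventions chosen for this example. A graph database would express the same walk as a single path pattern, shown as a Cypher comment under an assumed schema.

```python
from collections import defaultdict


def build_graph(triples: list[tuple[str, str, str]]) -> dict[str, list[tuple[str, str]]]:
    """Adjacency list with an inverse edge ("~REL") added for every (subject, REL, object)."""
    graph: dict[str, list[tuple[str, str]]] = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
        graph[obj].append(("~" + relation, subject))
    return graph


def follow(graph: dict[str, list[tuple[str, str]]], start: str, path: list[str]) -> set[str]:
    """Multi-hop traversal: from `start`, follow each relation in `path` in order."""
    frontier = {start}
    for relation in path:
        frontier = {obj for node in frontier for rel, obj in graph.get(node, []) if rel == relation}
    return frontier


triples = [
    ("Acme Metals", "LOCATED_IN", "Region X"),
    ("Borealis Parts", "LOCATED_IN", "Region Y"),
    ("Acme Metals", "SUPPLIES", "steel frame"),
    ("Borealis Parts", "SUPPLIES", "sensor kit"),
    ("steel frame", "USED_IN", "Project Apollo"),
    ("steel frame", "USED_IN", "Project Hermes"),
    ("sensor kit", "USED_IN", "Project Apollo"),
]
graph = build_graph(triples)

# region <- supplier -> component -> project. Roughly this Cypher (assumed schema):
#   MATCH (:Region {name: 'Region X'})<-[:LOCATED_IN]-(s)-[:SUPPLIES]->(c)-[:USED_IN]->(p)
#   RETURN DISTINCT p.name
print(sorted(follow(graph, "Region X", ["~LOCATED_IN", "SUPPLIES", "USED_IN"])))
# -> ['Project Apollo', 'Project Hermes']
```

No embedding model would reliably surface "Project Hermes" for this question, because nothing in its text mentions Region X; the connection exists only in the relationships.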

Graph traversal excels at:

- Multi-hop reasoning across relationship chains

- Dependency and impact analysis

- Hierarchical navigation (org charts, taxonomies)

- Root cause analysis following causal links

But graph traversal struggles with:

- Fuzzy semantic matching

- Unstructured content without clear entities

- Exploratory queries without clear starting points

The Hybrid Solution: Best of Both Worlds

The most effective systems combine both paradigms. A typical pattern:

- Vector search identifies semantically relevant entry points

- Graph traversal expands from those entry points following relationships

- Fusion strategies combine results for final ranking
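The three steps above can be sketched end to end. The scoring rule is an illustrative assumption, not GraphRAG's method or any standard: entry points score their cosine similarity, each hop multiplies the score by a fixed `decay`, and a node reached along several paths keeps its best score. Chunk and entity names are hypothetical.

```python
import math
from collections import defaultdict


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_retrieve(query_vec, chunk_vecs, edges, k_entry=1, hops=2, decay=0.5):
    """Vector entry points, then graph expansion, then one fused ranking (toy scoring)."""
    # 1. Vector search: the k_entry most similar chunks become entry points.
    sims = {node: cosine(query_vec, vec) for node, vec in chunk_vecs.items()}
    entries = sorted(sims, key=sims.get, reverse=True)[:k_entry]

    # 2. Graph traversal: expand outward along relationships (treated as undirected).
    adjacency = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    scores = {node: sims[node] for node in entries}
    frontier = list(entries)
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            for neighbour in adjacency[node]:
                propagated = scores[node] * decay
                # 3. Fusion: keep the strongest evidence seen for each node.
                if propagated > scores.get(neighbour, 0.0):
                    scores[neighbour] = propagated
                    next_frontier.append(neighbour)
        frontier = next_frontier
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


chunk_vecs = {"apollo-status-report": [0.9, 0.1], "cafeteria-menu": [0.1, 0.9]}
edges = [
    ("apollo-status-report", "Project Apollo"),
    ("Project Apollo", "Q3 budget"),
    ("Q3 budget", "Sarah"),
]
for node, score in hybrid_retrieve([1.0, 0.0], chunk_vecs, edges):
    print(f"{node}: {score:.3f}")
```

The `hops` limit is the main cost lever: each extra hop can pull in related context (here, "Sarah" at three hops) but also widens the candidate set the final ranking has to sort through.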

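Microsoft's GraphRAG builds on this pattern. It uses community detection to cluster related entities and pre-generates community summaries, which support two query modes: local search (embedding lookup finds relevant entities, then retrieval expands through their graph neighborhood) and global search (map-reduce over community summaries for questions about the corpus as a whole).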

4.3 Contextual Embeddings and Retrieval Optimization

Raw retrieval is often mediocre. The techniques in this section transform retrieval from "good enough" to "production-grade."

Contextual Embeddings: Context Matters

A critical insight from recent research: the quality of the text you embed can matter more than the size of the embedding model, and the best embeddings include context. When you embed a document chunk in isolation, you lose crucial information about where that chunk sits in the larger document.

The solution: contextual embeddings. Before embedding a chunk, prepend document-level context: the document summary, the section header, or both. The embedding then captures the chunk's meaning in context, which can substantially improve retrieval accuracy.

Implementation pattern:

embed(f"{document_summary}\n\n{section_header}\n\n{chunk_text}")

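This simple technique can improve retrieval precision by 20-30%, though gains vary by corpus and are worth measuring on your own data. It adds no query-time inference cost: the extra tokens are embedded once, at indexing time (plus one summary-generation call per document if an LLM writes the summaries).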
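The one-line pattern can be wrapped in a small chunker. This is a sketch under stated assumptions: chunks are fixed word windows (a real pipeline would split on sentences or tokens), the document summary already exists, and `Section` and `contextual_chunks` are hypothetical names. The embedding call is left out because it depends on your provider.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Section:
    header: str
    paragraphs: list[str]


def contextual_chunks(document_summary: str, sections: list[Section],
                      max_words: int = 200) -> list[tuple[str, str]]:
    """Return (raw_chunk, text_to_embed) pairs.

    The raw chunk is what you show the model at answer time; the contextualized
    string (summary + section header + chunk) is what you embed and index.
    """
    pairs = []
    for section in sections:
        words = " ".join(section.paragraphs).split()
        for start in range(0, len(words), max_words):
            chunk_text = " ".join(words[start:start + max_words])
            pairs.append((chunk_text, f"{document_summary}\n\n{section.header}\n\n{chunk_text}"))
    return pairs


summary = "Acme Corp employee handbook, 2024 edition."
sections = [Section("Compensation: Pay bands",
                    ["Band 3 covers senior engineers.", "Reviews happen every March."])]
for raw, to_embed in contextual_chunks(summary, sections, max_words=5):
    print(repr(to_embed))
```

On its own, "Reviews happen every March." could refer to performance, code, or safety reviews; the embedded text now ties it to compensation. Keeping the raw chunk separate avoids repeating the same summary in every passage placed in the prompt.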

Hierarchical Retrieval: Coarse to Fine

Rather than searching the entire knowledge base for every query, hierarchical retrieval implements a "check the drawer label before searching the folders" approach:

- Domain Selection: Which knowledge domain is relevant?

- Document Retrieval: Within that domain, which documents matter?

- Chunk Extraction: Within those documents, which passages answer the question?

This multi-stage approach reduces latency (fewer candidates at each stage) and improves precision (early filtering removes noise).
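A minimal sketch of the three stages, assuming a toy word-overlap score in place of a real retriever and a hypothetical nested-dict corpus layout (domain description, then document title, then chunks). Only the short domain descriptions are scored first, so chunks in unrelated domains are never examined.

```python
from __future__ import annotations

import re


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(query: str, text: str) -> int:
    """Toy relevance score: count of shared words (stand-in for a real retriever)."""
    return len(words(query) & words(text))


def hierarchical_search(query: str, corpus: dict[str, dict[str, list[str]]],
                        top_domains: int = 1, top_docs: int = 2, top_chunks: int = 3) -> list[str]:
    """corpus maps domain description -> document title -> chunks (hypothetical layout)."""
    # Stage 1: domain selection, scoring only the short domain descriptions.
    domains = sorted(corpus, key=lambda d: overlap(query, d), reverse=True)[:top_domains]
    # Stage 2: document retrieval inside the chosen domains, scoring titles.
    docs = [(d, title) for d in domains for title in corpus[d]]
    docs = sorted(docs, key=lambda dt: overlap(query, dt[1]), reverse=True)[:top_docs]
    # Stage 3: chunk extraction, scoring only chunks of the surviving documents.
    chunks = [c for d, title in docs for c in corpus[d][title]]
    ranked = sorted(chunks, key=lambda c: overlap(query, c), reverse=True)
    return [c for c in ranked if overlap(query, c) > 0][:top_chunks]


corpus = {
    "hr benefits leave payroll": {
        "parental leave policy": ["Parental leave is 16 weeks at full pay.",
                                  "Leave requests go through the HR portal."],
        "payroll calendar": ["Salaries are paid on the last business day."],
    },
    "it security passwords devices": {
        "password policy": ["Passwords rotate every 90 days."],
    },
}
print(hierarchical_search("How many weeks of parental leave do I get?", corpus))
```

The trade-off is that a wrong early decision cannot be recovered later, so real systems often keep more than one domain or document at each stage.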

Entity Extraction and Automated Graph Construction

Knowledge graphs are powerful, but manual construction is expensive and doesn't scale. Modern NLP pipelines can automatically extract entities and relationships from unstructured text:

- Named Entity Recognition (NER): Identifying people, organizations, locations, concepts

- Relationship Extraction: Determining how entities relate

- Coreference Resolution: Linking mentions of the same entity

This bridges the gap between unstructured documents and structured knowledge, enabling graph-based reasoning over document collections without manual annotation.
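To show the shape of the pipeline's output, here is a deliberately tiny stand-in: hand-written regular expressions play the role of NER plus relation extraction, and a fixed alias table plays the role of coreference resolution. Production pipelines would use trained models (spaCy, for example, ships NER models) or an LLM prompted to emit triples. The patterns, alias table, and relation names here are hypothetical.

```python
import re
from collections import defaultdict

NAME = r"[A-Z][a-z]+(?: [A-Z][a-z]+)?"  # one or two capitalised words

# Hypothetical hand-written patterns standing in for NER + relation extraction models.
PATTERNS = {
    "REPORTS_TO": re.compile(rf"({NAME}) reports to ({NAME})"),
    "APPROVED": re.compile(rf"({NAME}) approved the ([A-Z0-9][\w ]*? budget)"),
}

# Hypothetical alias table standing in for coreference resolution / entity linking.
ALIASES = {"Sarah": "Sarah Chen", "Omar": "Omar Diaz"}


def canonical(mention: str) -> str:
    return ALIASES.get(mention, mention)


def extract_triples(text: str) -> list[tuple[str, str, str]]:
    """Turn sentences into (subject, relation, object) triples with canonical entity names."""
    triples = []
    for relation, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            subject, obj = match.groups()
            triples.append((canonical(subject), relation, canonical(obj)))
    return triples


def build_graph(triples: list[tuple[str, str, str]]) -> dict[str, list[tuple[str, str]]]:
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph


text = "Sarah Chen reports to Omar Diaz. Later that week, Sarah approved the Q3 budget."
triples = extract_triples(text)
print(triples)
print(dict(build_graph(triples)))
```

The alias step is what lets "Sarah" in the second sentence land on the same node as "Sarah Chen" in the first; without it, the graph would split one person into two entities and break multi-hop queries.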

Hybrid Fusion Strategies

When both vector and graph retrieval return results, they must be intelligently combined. Common approaches:

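Reciprocal Rank Fusion (RRF): Scores each result by summing 1/(k + rank) across the ranked lists it appears in, where k is a smoothing constant (commonly 60). Because it uses only ranks, it needs no score calibration. Simple, effective, and parameter-light.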

Score Normalization: Rescales scores from each retrieval method to a common range (for example, min-max scaling to [0, 1]) before combining them, typically with a weighted sum.

Learning to Rank: Trains a model to weight the different retrieval signals optimally, based on historical relevance judgments.
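The first two strategies fit in a few lines each. A minimal sketch, with hypothetical document IDs and scores: the vector list is ranked by cosine similarity and the graph list by a path count, two scales that cannot be added directly. Documents missing from one list simply get nothing from it, which is one of several possible conventions.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """RRF: score(d) = sum over ranked lists of 1 / (k + rank(d)), ranks starting at 1."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def min_max_fusion(score_maps: list[dict[str, float]], weights: list[float]) -> list[tuple[str, float]]:
    """Score normalization: rescale each method's scores to [0, 1], then take a weighted sum."""
    fused: dict[str, float] = defaultdict(float)
    for scores, weight in zip(score_maps, weights):
        if not scores:
            continue
        lo, hi = min(scores.values()), max(scores.values())
        for doc_id, score in scores.items():
            normalized = (score - lo) / (hi - lo) if hi > lo else 1.0
            fused[doc_id] += weight * normalized
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)


vector_ranking = ["d1", "d2", "d3"]  # e.g. ordered by cosine similarity
graph_ranking = ["d3", "d1", "d4"]   # e.g. ordered by number of connecting paths
print(reciprocal_rank_fusion([vector_ranking, graph_ranking]))

vector_scores = {"d1": 0.92, "d2": 0.88, "d3": 0.80}  # cosine similarities
graph_scores = {"d3": 5.0, "d1": 2.0, "d4": 1.0}      # path counts: a different scale
print(min_max_fusion([vector_scores, graph_scores], weights=[0.5, 0.5]))
```

RRF ignores score magnitudes entirely, which is why it is robust to mismatched scales; min-max keeps magnitudes but a single outlier can squash every other normalized score toward zero.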

The key is measuring and iterating. Different fusion strategies work better for different query types and domains.

The Principle-Based Transformation

From Single-Paradigm Thinking...

- Vector-only RAG with simple similarity search

- Graph-only systems requiring manual knowledge engineering

- Flat, single-tier memory architectures

- Static embeddings without contextual enhancement

To Hybrid Memory Architecture...

- Three-tier memory inspired by cognitive science

- Hybrid vector + graph retrieval for comprehensive coverage

- Contextual embeddings and hierarchical retrieval for quality

- Automated graph construction for scalability

Transferable Competencies

Mastering hybrid memory architectures builds deep expertise in:

- Cognitive Science: Memory models, knowledge representation, cognitive architectures

- Information Retrieval: Vector search, ranking algorithms, evaluation metrics (precision, recall, NDCG)

- Graph Theory: Graph algorithms, community detection, path finding, centrality measures

- Natural Language Processing: Entity extraction, relationship extraction, coreference resolution

- Vector Databases: Embedding models, ANN algorithms, indexing strategies

- Graph Databases: Cypher, SPARQL, graph modeling, query optimization

- Embedding Models: Dense vs. sparse embeddings, fine-tuning, contextual enhancement

Common Pitfalls to Avoid

- Vector-Only Thinking: Missing the power of structured relationships and multi-hop reasoning

- Poor Chunking Strategies: Creating chunks that lose context or are inappropriately sized

- Ignoring Temporal Aspects: Not tracking when information was added or updated

- No Contextual Embeddings: Embedding chunks without surrounding context

- Flat Retrieval: Not using hierarchical or multi-stage retrieval for efficiency

- Manual Graph Construction: Not automating entity extraction and relationship building

- No Fusion Strategy: Naively combining vector and graph results

- Ignoring Scalability: Not planning for growth in knowledge base size

Implementation Guidance

For Knowledge Engineers: Design the three-tier memory architecture appropriate for your domain. Define entity schemas and relationship types for knowledge graphs. Establish chunking strategies and embedding approaches.

For Developers: Implement contextual embedding pipelines. Build hybrid retrieval systems with proper fusion. Create entity extraction pipelines for automated graph construction.

For Architects: Select appropriate vector and graph databases for your scale. Design data ingestion and indexing pipelines. Plan for scalability through sharding, replication, and caching.

Real-World Applications

Legal Document Analysis: Law firms use hybrid vector + graph systems to find precedents and analyze complex case relationships, enabling 10x faster research and discovery of non-obvious connections.

Enterprise IT Support: Three-tier memory (episodic: past tickets, semantic: knowledge base, procedural: solutions) enables a 60% reduction in resolution time with consistent quality.

Financial Risk Analysis: Knowledge graphs tracking dependencies between entities, projects, and market events enable early identification of systemic risks and comprehensive impact analysis.

Scientific Research: Hybrid retrieval with citation graph traversal accelerates literature review and enables discovery of cross-domain connections.

Looking Forward

The field is evolving toward:

- Neuro-Symbolic Integration: Combining neural embeddings with symbolic reasoning for more powerful hybrid systems

- Continuous Learning: Knowledge graphs that automatically update from streaming data

- Explainable Retrieval: Systems that can explain why specific information was retrieved

- Multimodal Knowledge Graphs: Incorporating images, video, and sensor data alongside text

- Federated Knowledge: Secure, privacy-preserving knowledge sharing across organizations

Next Skill: Context Economics — Managing the most expensive resource in AI systems: context tokens.
