Data Governance

Data Quality, Governance, and Grounding

Skill 6 of 9 | Pillar II: Knowledge & Context

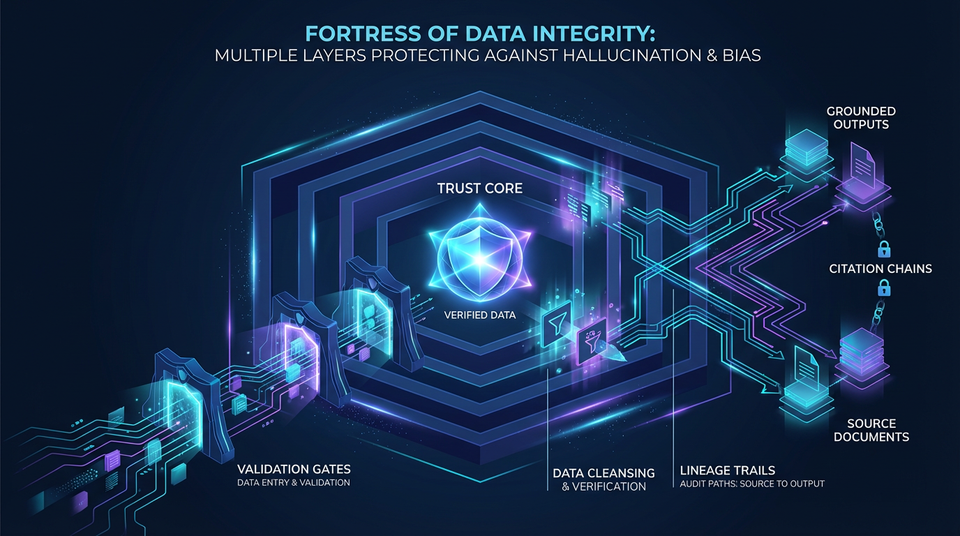

The foundational discipline that separates toy demos from production-grade agentic AI through rigorous data quality, governance, and grounding.

The Foundation Everything Else Rests On

Here's a truth that will determine whether your AI deployment succeeds or fails: data quality is the single most important factor in agent performance. Not model size. Not prompt engineering. Not the sophistication of your orchestration layer. Data quality.

The principle of "garbage in, garbage out" applies with even greater force to agentic systems than traditional software. An agent with perfect reasoning capabilities will fail—spectacularly and expensively—if grounded in inaccurate, outdated, or biased data. A brilliant agent querying a corrupted knowledge base is like a genius consultant given false information: the analysis will be impressive, but the conclusions will be wrong.

Skill 6 represents a critical addition to the Agentic AI Skills Framework, addressing what was missing from earlier frameworks focused purely on agent architecture. A recurring lesson across modern frameworks, especially data-centric ones like LlamaIndex and Haystack, is that organizations fail at AI not because their agents aren't smart enough, but because their data isn't trustworthy enough.

This skill encompasses three critical areas: ensuring data accuracy through rigorous quality assurance, establishing governance and traceability for compliance and trust, and grounding agent outputs in verifiable sources. Master these, and you've built the foundation that makes everything else possible.

The Three Sub-Skills of Data Governance

| Sub-Skill | Focus Area | Key Concepts |
|---|---|---|
| 6.1 Data Quality Assurance | Ensuring data accuracy, consistency, and freshness | Validation, deduplication, canonicalization, staleness management |
| 6.2 Data Governance and Lineage | Traceability, access control, and bias mitigation | Lineage tracking, RBAC, bias detection, compliance |
| 6.3 Grounding and Hallucination Prevention | Ensuring factual correctness and source attribution | Strict grounding, citation, confidence scoring |

6.1 Data Quality Assurance

Rigorous processes to ensure that data feeding agentic systems is accurate, consistent, and current. This is where production-grade AI separates from demos.

Data Validation and Schema Enforcement

Core Principle: All ingested data must conform to defined schemas and quality standards before entering your system.

For structured data, this means enforcing database constraints: type checking, foreign key validation, range checks, and referential integrity. A customer ID column defined as an 8-digit integer should reject "John Smith" with an error instead of storing it.

For unstructured data, validation is more nuanced but equally critical: detecting corrupted PDFs, identifying low-quality OCR output that might produce garbled text, checking for malformed documents, and validating file integrity. An agent working with a corrupted document is worse than an agent with no document at all—at least the latter knows it lacks information.
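For unstructured inputs, a few cheap gate checks catch many problems before they reach an agent. The Python sketch below assumes each PDF arrives as raw bytes together with its extracted text. It checks for the `%PDF-` header and `%%EOF` trailer (a truncated download usually lacks the trailer) and measures how much of the extracted text consists of unexpected symbols, a rough signal of bad OCR. The function names and the 0.2 threshold are illustrative assumptions; a production pipeline would also attempt a full parse with a PDF library.

```python
from dataclasses import dataclass

@dataclass
class DocCheck:
    ok: bool
    reasons: list[str]

def looks_like_complete_pdf(data: bytes) -> bool:
    """Heuristic structural check: starts with '%PDF-' and ends with '%%EOF'."""
    # Trailing newlines after %%EOF are common, so strip whitespace before checking
    return data.startswith(b"%PDF-") and data.rstrip().endswith(b"%%EOF")

def garble_ratio(text: str) -> float:
    """Fraction of non-whitespace characters that are not letters, digits, or common punctuation."""
    allowed_punct = set(".,;:!?'\"()-/%$&")
    chars = [c for c in text if not c.isspace()]
    if not chars:
        return 1.0  # an empty extraction is treated as fully garbled
    bad = sum(1 for c in chars if not (c.isalnum() or c in allowed_punct))
    return bad / len(chars)

def check_document(raw: bytes, extracted_text: str, max_garble: float = 0.2) -> DocCheck:
    """Collect every reason to reject the document; ok is True only if there are none."""
    reasons = []
    if not looks_like_complete_pdf(raw):
        reasons.append("missing PDF header or EOF marker (possibly truncated or corrupted)")
    if garble_ratio(extracted_text) > max_garble:
        reasons.append("extracted text looks garbled (likely poor OCR)")
    return DocCheck(ok=not reasons, reasons=reasons)
```

Returning all reasons, rather than stopping at the first failure, makes quarantine reports easier to act on.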

Technical Implementation: Use validation frameworks like Great Expectations to define data quality rules. Create expectations like "all customer IDs must be 8-digit integers" or "all document dates must be within the past 10 years." Failed validations trigger alerts or prevent data ingestion entirely. The goal is to catch problems at the gate, not after they've corrupted your agent's responses.
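Great Expectations has its own API for declaring such rules, and that API has changed across major versions, so rather than guess at it, here is a plain-Python sketch of the same two example rules acting as an ingestion gate. The function names are hypothetical, and "10 years" is approximated as 3,650 days.

```python
from datetime import date, datetime, timedelta

def is_8_digit_int(value) -> bool:
    # bool is a subclass of int in Python, so reject it explicitly
    return (
        isinstance(value, int)
        and not isinstance(value, bool)
        and 10_000_000 <= value <= 99_999_999
    )

def within_past_years(value, years: int, today: date) -> bool:
    # datetime subclasses date but cannot be compared with a plain date, so require a date
    if not isinstance(value, date) or isinstance(value, datetime):
        return False
    # A year is approximated as 365 days, precise enough for a gatekeeping rule
    return today - timedelta(days=365 * years) <= value <= today

def validate_record(record: dict, today: date) -> list[str]:
    """Return rule violations; an empty list means the record may be ingested."""
    errors = []
    if not is_8_digit_int(record.get("customer_id")):
        errors.append(f"customer_id must be an 8-digit integer, got {record.get('customer_id')!r}")
    if not within_past_years(record.get("doc_date"), 10, today):
        errors.append(f"doc_date must fall within the past 10 years, got {record.get('doc_date')!r}")
    return errors

def ingest(records: list[dict], today: date) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Gate at ingestion: accept valid records, quarantine the rest with their reasons."""
    accepted, quarantined = [], []
    for rec in records:
        errs = validate_record(rec, today)
        if errs:
            quarantined.append((rec, errs))
        else:
            accepted.append(rec)
    return accepted, quarantined
```

Quarantining instead of silently dropping keeps failed records available for triage and alerting.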

Deduplication and Canonicalization

Core Principle: Ensuring a single, consistent representation of each entity across data sources.

Enterprise data is messy. The same entity appears in multiple forms: "IBM", "International Business Machines", "IBM Corp.", "I.B.M." are all the same company. Without deduplication and canonicalization, your agent might treat these as four different entities, leading to inconsistent and incorrect reasoning.

Entity resolution algorithms use fuzzy matching, similarity scoring, and machine learning to identify duplicates. Canonicalization establishes a single authoritative representation for each entity—the "canonical form" that all variations map to.
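The simplest form of canonicalization is deterministic: normalize each mention, then look it up in an alias table that maps normalized variants to one canonical name. This sketch assumes a small hand-maintained table (`CANONICAL_ALIASES` is hypothetical; in practice it would come from a master-data or entity-resolution system) and shows the four IBM variants above collapsing to a single entity.

```python
import re
from typing import Optional

# Hypothetical alias table: normalized variant -> canonical form
CANONICAL_ALIASES = {
    "ibm": "International Business Machines Corporation",
    "international business machines": "International Business Machines Corporation",
}

# Legal-form suffixes dropped during normalization (illustrative, not exhaustive)
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "incorporated", "co", "ltd", "llc"}

def normalize(name: str) -> str:
    """Lowercase, remove dots, replace other punctuation with spaces, strip trailing legal suffixes."""
    s = name.lower().replace(".", "")       # "I.B.M." -> "ibm"
    s = re.sub(r"[^a-z0-9 ]+", " ", s)
    tokens = s.split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()                         # "ibm corp" -> "ibm"
    return " ".join(tokens)

def canonicalize(name: str) -> Optional[str]:
    """Return the canonical form, or None if the mention is unresolved."""
    return CANONICAL_ALIASES.get(normalize(name))
```

Unresolved mentions (`None`) are the candidates to pass on to the fuzzier techniques listed below.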

Technical Approaches:

- Fuzzy string matching: Levenshtein distance, Jaro-Winkler similarity

- Phonetic algorithms: Soundex, Metaphone for name matching

- ML-based resolution: Trained models that learn entity equivalence

- Knowledge graph linking: Connecting to authoritative entity databases
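Two of the techniques above are small enough to sketch directly: Levenshtein edit distance (normalized into a 0 to 1 similarity) and American Soundex. Both are standard algorithms; the function names and the choice to normalize by the longer string's length are this sketch's own.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum single-character insertions, deletions, or substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution (free if characters match)
            ))
        prev = curr
    return prev[-1]

def similarity(a: str, b: str) -> float:
    """Edit distance scaled to 0..1, where 1.0 means identical."""
    if not a and not b:
        return 1.0
    return 1.0 - levenshtein(a, b) / max(len(a), len(b))

_SOUNDEX_CODES = {
    **dict.fromkeys("bfpv", "1"),
    **dict.fromkeys("cgjkqsxz", "2"),
    **dict.fromkeys("dt", "3"),
    "l": "4",
    **dict.fromkeys("mn", "5"),
    "r": "6",
}

def soundex(name: str) -> str:
    """American Soundex: first letter plus three digits, e.g. 'Robert' -> 'R163'."""
    letters = [c for c in name.lower() if c.isalpha()]
    if not letters:
        return ""
    first = letters[0]
    digits = []
    last_code = _SOUNDEX_CODES.get(first, "")
    for c in letters[1:]:
        if c in "hw":
            continue  # h and w do not separate letters that share a code
        code = _SOUNDEX_CODES.get(c, "")
        if code and code != last_code:
            digits.append(code)
        last_code = code  # vowels reset this to "", so a repeated code after a vowel is kept
    return (first.upper() + "".join(digits) + "000")[:4]
```

For example, `similarity("Jon Smith", "John Smith")` is 0.9, and "Robert" and "Rupert" share the Soundex code R163, so either signal could flag the pair for review.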

Freshness and Staleness Management

Core Principle: Tracking data currency and refreshing outdated information.

Data becomes stale. A document from 2020 about COVID-19 policies is no longer relevant in 2026. An agent that doesn't know this will confidently cite outdated guidelines, potentially causing real harm.

The system must track data freshness—when was this data last updated?—and implement automatic refresh mechanisms for critical data sources. Agents should be alerted when working with potentially outdated information, and in some cases, should refuse to use stale data entirely.

Implementation Patterns:

- Timestamp tracking: Record when each piece of data was created and last updated

- TTL (time-to-live) policies: Automatically expire data after a defined period

- Scheduled refresh jobs: Periodically re-ingest data from authoritative sources

- Staleness scoring: Calculate how "stale" data is based on domain-specific rules

- Temporal validity windows: Define the period during which data is considered valid
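The patterns above combine naturally into one freshness decision per document. This sketch assumes each record carries a last-updated timestamp, a domain-specific TTL, and an optional end of its validity window; the linear staleness score and the 0.8 warning level are illustrative choices, and `DocRecord` is a hypothetical type.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class DocRecord:
    doc_id: str
    last_updated: datetime                 # timestamp tracking
    ttl: timedelta                         # domain-specific time-to-live
    valid_until: Optional[datetime] = None  # end of temporal validity window, if known

def staleness(doc: DocRecord, now: datetime) -> float:
    """0.0 = just refreshed, 1.0 = at TTL, above 1.0 = past TTL (linear in age)."""
    return (now - doc.last_updated) / doc.ttl  # timedelta / timedelta -> float

def freshness_action(doc: DocRecord, now: datetime, warn_at: float = 0.8) -> str:
    """Map a document's freshness to what the agent should do with it."""
    if doc.valid_until is not None and now > doc.valid_until:
        return "reject"   # outside its validity window: never use
    s = staleness(doc, now)
    if s >= 1.0:
        return "refresh"  # TTL expired: re-ingest from the authoritative source first
    if s >= warn_at:
        return "warn"     # usable, but flag possible staleness to the agent
    return "ok"
```

Checking the validity window before the TTL means that a recently re-ingested but obsolete document (such as a superseded 2020 policy) is still refused.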

6.2 Data Governance and Lineage

Establishing policies and systems for data traceability, access control, and fairness. This is where compliance meets capability.

Data Lineage Tracking

Core Principle: Every piece of information must be traceable back to its source.

In regulated industries, it's not enough for an agent to produce a correct answer—it must also explain where the information came from. When a healthcare agent recommends a treatment protocol, regulators and clinicians need to know: Which guidelines? From which organization? Published when?

Data lineage systems track the provenance of every data element, creating audit trails that show the complete journey: source document → extraction process → transformation → storage → retrieval → agent output.
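At its core, a lineage graph records which artifacts each artifact was derived from, so any agent output can be walked back to its original sources. Tools like Apache Atlas and OpenLineage attach far richer metadata; this in-memory Python sketch only shows the traversal idea, and all node names are hypothetical.

```python
from collections import defaultdict

class LineageGraph:
    """Edges point from an artifact to the artifacts it was derived from."""

    def __init__(self):
        self.parents = defaultdict(set)

    def record(self, child: str, *parents: str) -> None:
        self.parents[child].update(parents)

    def upstream(self, node: str) -> set[str]:
        """All ancestors of a node, found by iterative depth-first search."""
        seen, stack = set(), [node]
        while stack:
            for p in self.parents.get(stack.pop(), ()):
                if p not in seen:
                    seen.add(p)
                    stack.append(p)
        return seen

    def sources(self, node: str) -> set[str]:
        """Root ancestors: original sources with no recorded parents."""
        return {n for n in self.upstream(node) if not self.parents.get(n)}

# Usage: source -> extraction -> chunk -> retrieval -> answer (plus a tool output)
g = LineageGraph()
g.record("extract:guideline.pdf", "source:guideline.pdf")
g.record("chunk:17", "extract:guideline.pdf")
g.record("retrieval:q42", "chunk:17")
g.record("answer:q42", "retrieval:q42", "tool:dosage-calculator")
```

Here `g.sources("answer:q42")` returns the guideline PDF and the tool output, which are exactly the items an auditor would want cited.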

Technical Implementation: Lineage graphs (directed acyclic graphs showing data flow), metadata tagging, provenance tracking systems like Apache Atlas and OpenLineage, immutable audit logs that cannot be altered after the fact.
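One common way to make an audit log tamper-evident is hash chaining: each entry's hash covers the previous entry's hash, so editing any past entry invalidates everything after it. The sketch below assumes JSON-serializable events and uses SHA-256. Note that it detects alteration rather than preventing it; true immutability usually also requires write-once storage or publishing the latest hash somewhere outside the system.

```python
import hashlib
import json

class AuditLog:
    """Append-only log in which every entry commits to the hash of the entry before it."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev: str, payload: str) -> str:
        return hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)  # canonical serialization
        digest = self._digest(prev, payload)
        # Store a deep copy so later mutation of the caller's dict is not mistaken for history
        self.entries.append({"event": json.loads(payload), "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the whole chain; False means some entry was altered or reordered."""
        prev = self.GENESIS
        for e in self.entries:
            expected = self._digest(prev, json.dumps(e["event"], sort_keys=True))
            if e["prev"] != prev or e["hash"] != expected:
                return False
            prev = e["hash"]
        return True
```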

Compliance Applications:

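- GDPR: Transparency rights around automated decision-making, often called a "right to explanation", under which individuals can request meaningful information about the logic involved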

- HIPAA: Healthcare data tracking—complete audit trails for PHI

- SOC 2: Audit trails for security compliance

- Financial regulations: Source attribution for investment advice

Access Control and Data Segmentation

Core Principle: Different users and agents should access different data based on roles and permissions.

Not all data should be accessible to all agents. A customer service agent shouldn't have access to employee salary data. A general-purpose assistant shouldn't be able to query the CEO's private documents.

Fine-grained access control implements:

- Role-based access (RBAC): Permissions tied to job function

- Attribute-based access (ABAC): Permissions based on user and resource attributes

- Data classification levels: Public, internal, confidential, restricted
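The three mechanisms are typically layered so that a request must pass all of them. The sketch below combines a role-to-domain table (RBAC), the four classification levels above (checked against a clearance), and a department attribute (ABAC). The roles, domains, and attributes are hypothetical, and a real deployment would delegate this to a policy engine or IAM service.

```python
from dataclasses import dataclass

# Classification levels from the text, ordered from least to most sensitive
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

# Hypothetical RBAC table: role -> data domains that role may read
ROLE_PERMISSIONS = {
    "customer_service_agent": {"orders", "support_tickets"},
    "hr_agent": {"orders", "salaries"},
}

@dataclass
class Principal:
    role: str
    clearance: str   # highest classification level this principal may read
    department: str

@dataclass
class Resource:
    domain: str
    classification: str
    owner_department: str = ""  # ABAC attribute; empty means no department restriction

def can_read(p: Principal, r: Resource) -> bool:
    """Default deny: every layer must allow the read. Unknown level names raise KeyError."""
    # RBAC: the role must grant this data domain at all
    if r.domain not in ROLE_PERMISSIONS.get(p.role, set()):
        return False
    # Classification: clearance must be at least the resource's level
    if LEVELS[p.clearance] < LEVELS[r.classification]:
        return False
    # ABAC: department-owned resources are readable only from that department
    if r.owner_department and p.department != r.owner_department:
        return False
    return True
```

For instance, a customer service agent is denied salary data by the RBAC layer alone, while an HR agent from the wrong department is stopped by the ABAC check.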

Multi-tenant systems must ensure complete data isolation between tenants. A customer's agent should never see another customer's data, even accidentally.

Technical Controls: Identity and access management (IAM), row-level security in databases, column-level encryption for sensitive fields, data masking for non-essential exposure, tokenization for sensitive identifiers.
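Masking and tokenization can be illustrated in a few lines. This sketch masks all but the last four characters and derives deterministic tokens with keyed HMAC-SHA256, a stand-in for a dedicated tokenization service (which often uses a vault of random tokens instead). The key handling, the `tok_` prefix, and the 16-hex-character truncation are assumptions made for readability.

```python
import hashlib
import hmac

def mask(value: str, visible: int = 4, mask_char: str = "*") -> str:
    """Data masking: hide all but the last `visible` characters."""
    if visible <= 0 or len(value) <= visible:
        return mask_char * len(value)  # too short to reveal anything safely
    return mask_char * (len(value) - visible) + value[-visible:]

def tokenize(value: str, key: bytes) -> str:
    """Deterministic token: same value and key always give the same token, so records
    can still be joined, but the value cannot be read back without a lookup table."""
    return "tok_" + hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]
```

Determinism is the design trade-off: it lets an agent correlate records for the same customer without seeing the identifier, but it also means the key must be protected like the data itself.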

Bias Detection and Mitigation

Core Principle: Identifying and correcting biases in training and enterprise data.

Training data and enterprise data often contain historical biases—gender, race, age, socioeconomic status. If not addressed, agents will perpetuate and amplify these biases. A hiring agent trained on historical hiring data will learn to discriminate if the historical data reflects discriminatory practices.

Bias Detection uses statistical analysis and fairness metrics:

- Demographic parity: Is the rate of positive outcomes (the selection rate) equal across groups?

- Equalized odds: Are both true positive and false positive rates equal across groups?

- Equal opportunity: Are true positive rates equal across groups?

- Calibration: For a given predicted score, is the actual outcome rate the same across groups?
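The first three metrics reduce to comparing per-group rates computed from a confusion matrix. This sketch assumes binary (0/1) labels and predictions and reports each metric as the largest gap between any two groups. Calibration is left out because it needs predicted scores rather than hard decisions.

```python
def _rate(num: int, den: int) -> float:
    # NaN marks an undefined rate (e.g. a group with no positive labels); check coverage first
    return num / den if den else float("nan")

def group_rates(y_true: list[int], y_pred: list[int], groups: list[str]) -> dict:
    """Per-group selection rate, true positive rate (TPR), and false positive rate (FPR)."""
    stats = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        pos = [i for i in idx if y_true[i] == 1]
        neg = [i for i in idx if y_true[i] == 0]
        stats[g] = {
            "selection_rate": _rate(sum(y_pred[i] for i in idx), len(idx)),
            "tpr": _rate(sum(y_pred[i] for i in pos), len(pos)),
            "fpr": _rate(sum(y_pred[i] for i in neg), len(neg)),
        }
    return stats

def _gap(stats: dict, key: str) -> float:
    values = [s[key] for s in stats.values()]
    return max(values) - min(values)

def fairness_report(y_true: list[int], y_pred: list[int], groups: list[str]) -> dict:
    s = group_rates(y_true, y_pred, groups)
    return {
        "demographic_parity_gap": _gap(s, "selection_rate"),
        "equal_opportunity_gap": _gap(s, "tpr"),
        "equalized_odds_gap": max(_gap(s, "tpr"), _gap(s, "fpr")),  # TPR and FPR together
    }

# Usage: group A is selected more often, entirely through extra false positives
groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 1, 0, 0]
report = fairness_report(y_true, y_pred, groups)
```

In this example equal opportunity holds (both groups have a TPR of 1.0) while demographic parity (gap 0.25) and equalized odds (gap 0.5) do not, which shows why the metrics are not interchangeable.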

Bias Mitigation techniques include:

- Reweighting: Adjusting sample weights to balance representation

- Resampling: Over/under-sampling to balance datasets

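- Adversarial debiasing: Training the model alongside an adversary that tries to predict the protected attribute from the model's outputs, pushing the model toward predictions that do not reveal that attribute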

- Fairness constraints: Enforcing fairness during optimization
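Reweighting can be made concrete with the reweighing scheme of Kamiran and Calders, which gives each (group, label) cell the weight P(group) × P(label) / P(group, label). After weighting, group and label are statistically independent in the training data, and the weights sum to the number of samples. The sketch below assumes every (group, label) combination that appears is counted from the same list; how the weights are passed to a learner is left to the training library.

```python
from collections import Counter

def reweighing_weights(groups: list[str], labels: list[int]) -> list[float]:
    """Per-sample weight P(g) * P(y) / P(g, y), which makes group and label independent."""
    n = len(labels)
    g_count = Counter(groups)
    y_count = Counter(labels)
    gy_count = Counter(zip(groups, labels))
    return [
        (g_count[g] / n) * (y_count[y] / n) / (gy_count[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]

# Usage: unweighted, group A has a 2/3 positive rate and group B has 1/3
groups = ["A", "A", "A", "B", "B", "B"]
labels = [1, 1, 0, 1, 0, 0]
weights = reweighing_weights(groups, labels)
```

Under-represented cells (here, positives in group B and negatives in group A) receive a weight of 1.5 and over-represented ones 0.75, which brings both groups' weighted positive rates to 0.5.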

6.3 Grounding and Hallucination Prevention

Techniques to ensure agent outputs are factually correct and tied to verifiable sources. This is the difference between a trustworthy assistant and a confident fabricator.

Strict Grounding Requirements

Core Principle: Agents should only use information from retrieved documents or tool outputs, never relying on parametric knowledge for factual claims.

LLMs have parametric knowledge baked into their weights, but this knowledge can be outdated, incorrect, or hallucinated. A model might "know" facts that were never true, or confidently state information that changed after its training cutoff.

Strict grounding configures agents to base all factual claims on retrieved information. This is implemented through:

- Prompt engineering: "Only use information from the provided documents. Do not rely on prior knowledge."

- Validation: Checking that claims are supported by retrieved context

- Rejection: Refusing to answer when retrieved context doesn't support the query

When an agent says "According to the Q4 financial report...", there should actually be a Q4 financial report in context that says what the agent claims.
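The three grounding mechanisms can be wired together as a prompt builder, a support check, and a refusal path. The check below is deliberately crude: it flags any answer sentence that contains a number absent from the context, or whose content words mostly do not appear there. It catches invented figures and names, not subtle misreadings; production systems often use an entailment (NLI) model or an LLM judge instead. The stopword list, the 0.8 threshold, and all function names are assumptions.

```python
import re

STOPWORDS = {"the", "a", "an", "of", "to", "in", "and", "is", "was", "for",
             "on", "by", "with", "that", "this", "it", "as", "at", "are", "were"}

def build_grounded_prompt(question: str, docs: list[str]) -> str:
    """Prompt engineering: number the sources and restrict the model to them."""
    sources = "\n".join(f"[{i}] {d}" for i, d in enumerate(docs, start=1))
    return (
        "Only use information from the provided documents. Do not rely on prior knowledge.\n"
        "If the documents do not contain the answer, say so.\n\n"
        f"Documents:\n{sources}\n\nQuestion: {question}"
    )

def _content_tokens(text: str) -> set[str]:
    # Words and numbers (decimals kept intact, e.g. "4.2"), minus stopwords
    return {t for t in re.findall(r"[a-z0-9]+(?:\.[0-9]+)?", text.lower()) if t not in STOPWORDS}

def unsupported_sentences(answer: str, context: str, min_overlap: float = 0.8) -> list[str]:
    """Validation: return answer sentences that the retrieved context does not appear to support."""
    ctx = _content_tokens(context)
    flagged = []
    for sent in re.split(r"(?<=[.!?])\s+", answer.strip()):
        toks = _content_tokens(sent)
        numbers = {t for t in toks if t[0].isdigit()}
        # Any number missing from the context, or too little word overlap, counts as unsupported
        if toks and (numbers - ctx or len(toks & ctx) / len(toks) < min_overlap):
            flagged.append(sent)
    return flagged

def grounded_answer(answer: str, context: str) -> str:
    """Rejection: refuse rather than return an answer containing unsupported claims."""
    if not context.strip() or unsupported_sentences(answer, context):
        return "I can't answer that from the provided documents."
    return answer
```

Treating numbers strictly is the key design choice: changing "4.2 million" to "9.7 million" barely moves word overlap, but it is exactly the kind of error that matters.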

Citation and Attribution

Core Principle: Require agents to cite sources for all factual claims.

Every factual claim should include a citation to the source document, enabling users to verify information. Citations should include:

- Document title

- Section or page number

- Timestamp (when was this written?)

- Direct quote or paraphrase indicator

This builds trust and enables fact-checking. Users can verify that the agent isn't hallucinating by clicking through to the source.

Implementation Patterns:

- Inline citations: "[According to the 2025 Policy Manual, Section 3.2...]"

- Footnote-style references: Numbered references with a bibliography

- Clickable links: Direct links to source documents

- Citation quality assessment: Verifying that citations actually support claims
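A citation can be modeled as a small record holding the four fields listed earlier, with formatters for inline and footnote styles and a basic quality check. In this sketch, a direct quote passes only if it appears verbatim (after whitespace normalization) in the source text; paraphrases would need a semantic check, which is out of scope here. The `Citation` type and its output formats are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Citation:
    title: str
    section: str                  # section or page number
    written: date                 # when the source was written
    quote: Optional[str] = None   # direct quote; None marks a paraphrase

    def inline(self) -> str:
        kind = f'"{self.quote}"' if self.quote else "paraphrase"
        return f"[{self.title}, {self.section}, {self.written.isoformat()}; {kind}]"

def _norm(s: str) -> str:
    return " ".join(s.split())  # collapse line breaks and repeated spaces

def verify_quote(c: Citation, source_text: str) -> bool:
    """Citation quality check: a direct quote must occur verbatim in the cited source."""
    if c.quote is None:
        return True  # paraphrases need a semantic check instead
    return _norm(c.quote) in _norm(source_text)

def footnotes(citations: list[Citation]) -> str:
    """Footnote-style bibliography: one numbered line per citation."""
    return "\n".join(
        f"[{i}] {c.title}, {c.section} ({c.written.isoformat()})"
        for i, c in enumerate(citations, start=1)
    )
```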

Confidence Scoring and Uncertainty Quantification

Core Principle: Agents should express uncertainty and refuse to answer when confidence is low.

Rather than hallucinating when uncertain, agents should quantify their confidence and refuse to answer when it falls below a threshold. "I don't know" is a valid and valuable response.

Implementation Approaches:

- Confidence scores: A 0-1 score for each answer, ideally calibrated so it can be read as a probability

- Uncertainty bands: "The value is between X and Y"

- Explicit refusal: "I don't have enough information to answer this question"

- Escalation: Requesting human clarification or expert review

- Threshold enforcement: Refusing to provide answers below a confidence threshold
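Threshold enforcement needs a confidence signal from somewhere. One option (an assumption here, not the only choice) is self-consistency: sample several answers to the same question and use the fraction that agree with the majority as the score. The sketch maps that score to three outcomes (answer, escalate to a human, or refuse); the 0.8 and 0.5 thresholds are illustrative, and an agreement rate is not a calibrated probability.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

@dataclass
class Decision:
    action: str             # "answer", "escalate", or "refuse"
    answer: Optional[str]
    confidence: float

def self_consistency_confidence(samples: list[str]) -> tuple[str, float]:
    """Majority answer and the share of samples agreeing with it (0..1)."""
    if not samples:
        return "", 0.0
    normalized = [s.strip().lower() for s in samples]
    answer, votes = Counter(normalized).most_common(1)[0]
    return answer, votes / len(normalized)

def decide(samples: list[str], answer_at: float = 0.8, escalate_at: float = 0.5) -> Decision:
    answer, conf = self_consistency_confidence(samples)
    if conf >= answer_at:
        return Decision("answer", answer, conf)
    if conf >= escalate_at:
        return Decision("escalate", answer, conf)  # send the draft to a human reviewer
    return Decision("refuse", None, conf)          # "I don't have enough information..."
```

The middle band is the important design choice: instead of a single cut-off, moderately confident answers are routed to expert review rather than being either shipped or discarded.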

Real-World Scenarios: Failures and Successes

Failure: Healthcare AI Hallucination

Scenario: Medical diagnosis agent hallucinates treatment recommendations not supported by medical literature.

Impact: Patient harm, legal liability, loss of trust in AI-assisted medicine.

Root Cause: No strict grounding requirements, no citation enforcement, parametric knowledge allowed for medical claims.

Mitigation: Implement RAG with strict grounding to medical literature databases. Require inline citations for all treatment recommendations. Implement confidence thresholds that escalate low-confidence cases to human physicians.

Failure: Hiring AI Bias

Scenario: Resume screening agent discriminates against women and minorities, perpetuating historical hiring biases.

Impact: Legal action, reputational damage, regulatory fines, perpetuation of workplace inequality.

Root Cause: Biased training data reflecting historical discrimination, no fairness monitoring, no bias detection.

Mitigation: Bias detection in training data, fairness constraints during model training, diverse training data, regular audits of hiring outcomes, human oversight for final decisions.

Success: Financial Services Compliance

Scenario: Investment advisory agent with complete data lineage and audit trails.

Implementation: Apache Atlas for lineage tracking, Great Expectations for validation, strict access control with RBAC, citation requirements for all investment recommendations.

Outcome: Passed regulatory audits with zero compliance violations. Complete audit trail for every recommendation. Customer trust increased. Competitive advantage in regulated markets.

The Principle-Based Transformation

From Ad-Hoc Data Management...

- Ingesting data without validation

- No deduplication or entity resolution

- Ignoring data freshness and staleness

- No lineage tracking or audit trails

- Allowing agents to hallucinate freely

To Systematic Data Governance...

- Understanding data quality dimensions (accuracy, completeness, consistency, timeliness)

- Mastering governance frameworks and compliance requirements

- Applying rigorous validation and quality assurance

- Implementing strict grounding and hallucination prevention

Transferable Competencies

Mastering data governance builds expertise in:

- Data Engineering: ETL pipelines, data validation, quality assurance

- Data Governance: Lineage tracking, access control, compliance frameworks

- Information Quality: Accuracy, completeness, consistency, timeliness dimensions

- Entity Resolution: Fuzzy matching, deduplication, canonicalization

- Fairness and Ethics: Bias detection, fairness metrics, mitigation techniques

- Regulatory Compliance: GDPR, HIPAA, SOC 2, PCI-DSS, industry standards

Common Pitfalls to Avoid

- No validation: Ingesting data without quality checks leads to garbage outputs

- Ignoring duplicates: Multiple representations of entities cause inconsistency

- Stale data: Using outdated information leads to incorrect decisions

- No lineage tracking: Cannot explain where information came from

- Weak access control: Data leakage and privacy violations

- Ignoring bias: Perpetuating and amplifying historical biases

- Allowing hallucinations: Not enforcing strict grounding requirements

- No citations: Users cannot verify factual claims

- Overconfidence: Agents don't express uncertainty when appropriate

Implementation Guidance

For Data Engineers: Implement data validation pipelines with Great Expectations. Build deduplication and entity resolution systems. Create data freshness tracking and automatic refresh mechanisms. Establish data lineage tracking with Apache Atlas or OpenLineage.

For Architects: Design data quality assurance architecture. Plan governance and compliance strategy. Define data classification levels and access policies. Specify grounding requirements and hallucination prevention mechanisms.

For Compliance Officers: Map regulatory requirements to technical controls. Define audit trail and lineage requirements. Establish data retention and deletion policies. Create bias monitoring and fairness reporting. Conduct regular compliance audits.

Looking Forward

The field is evolving toward:

- AI-Powered Data Quality: LLMs that automatically detect and correct data quality issues

- Self-Healing Data Pipelines: Systems that automatically remediate data quality problems

- Explainable Lineage: Natural language explanations of data provenance

- Federated Learning for Fairness: Learning fair models from distributed data without centralization

- Continuous Compliance: Real-time compliance monitoring and automated reporting

Next Skill: Identity Management — Managing the unique identities of non-human agents.
